Nudging Towards Better Software

If you aim to create better software through a deeper understanding of how our minds work, you're in the right place. Recent developments in behavioral science have shown that we may not be as rational as we think. Our cognitive biases show up in many creative human endeavors, and building software is no exception. This post works off some key ideas from Nudge: The Final Edition by Richard H. Thaler and Cass R. Sunstein, and explores those that could improve the software we make.

What is a Nudge?

A nudge is any mechanism that encourages one or more choices without disallowing other options.

A nudge can also be used to discourage choices rather than encourage them, in which case it is more affectionately called a sludge.

The book defines it as follows:

A nudge, as we will use the term, is any aspect of the choice architecture that alters people's behavior in a predictable way without forbidding any options or significantly changing their economic incentives. To count as a mere nudge, the intervention must be easy and cheap to avoid. Nudges are not taxes, fines, subsidies, bans, or mandates. Putting the fruit at eye level counts as a nudge. Banning junk food does not.

Why Nudge?

The concept of a nudge appeals to me for a couple of reasons. First, the target audience can still choose what would otherwise have been a disallowed option, letting one's decision-making process play out. This enhances one's sense of agency, encourages cooperation, and minimizes pushback that comes when one feels cornered, a phenomenon called reactance.

Second, it allows for graceful experimentation: we can observe the choices being made while the nudge is in place and make adjustments based on the results. If other options are disallowed, we lose the ability to measure how often those options would have been chosen, removing an important data point that could drive future improvements. While we have the confidence to nudge a certain way, it is equally important to have curiosity and intellectual humility in case the data points elsewhere.

We are all choice architects within the context of our roles in IT, whether as a technology manager, product owner, architect, designer, developer, tester, or tech writer. We are creating nudges whether or not we intend to do so. What counts as a nudge is the effect on the audience, not so much the intent of the choice architect.

My goal with this post is to help increase awareness of nudging so it becomes more intuitive to the IT practitioner.

Let's explore nudges that will help improve the software we build.

Specify Defaults

Specify defaults whenever feasible. Defaults are one of the most subtle yet influential nudges at an IT practitioner's disposal. Whether you are crafting user interfaces, service APIs, stored procedures, database tables, or CLI tools, providing defaults has the following benefits:

It nudges the user to the desired outcome,

It makes the artifact easier to use,

It enhances flexibility by handling the occasional scenario that requires values beyond the default.

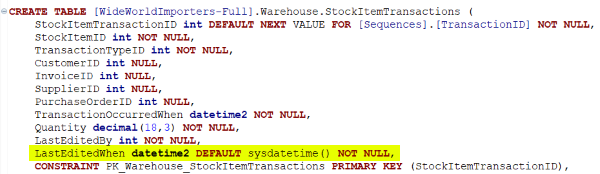

Here are a couple of simple examples. When defining the schema of your tables, adding defaults to the usual audit fields, such as LastUserID and LastUpdateDate, with values taken from the session context simplifies their use and automatically adds context to the dataset. The caller does not need to pass values for those fields in INSERT and UPDATE statements.
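
To make the audit-field idea concrete, here is a minimal sketch using Python's built-in sqlite3 module. The Orders table and its Item column are hypothetical; only the two audit column names come from the text above. SQLite has no notion of a session user, so the LastUserID default below is a placeholder literal standing in for whatever session-context value your database engine offers.

```python
import sqlite3

# Hypothetical table; only the audit column names come from the post.
SCHEMA = """
CREATE TABLE Orders (
    OrderID        INTEGER PRIMARY KEY,
    Item           TEXT NOT NULL,
    -- Placeholder literal: SQLite has no session user, so this stands in
    -- for a session-context value supplied by the database engine.
    LastUserID     TEXT NOT NULL DEFAULT 'app_session_user',
    LastUpdateDate TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
)
"""

def insert_order(conn: sqlite3.Connection, item: str) -> tuple:
    """Insert a row without naming the audit columns, then read it back."""
    cur = conn.execute("INSERT INTO Orders (Item) VALUES (?)", (item,))
    return conn.execute(
        "SELECT Item, LastUserID, LastUpdateDate FROM Orders WHERE OrderID = ?",
        (cur.lastrowid,),
    ).fetchone()

conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
row = insert_order(conn, "espresso")  # audit fields are filled in by the defaults
```

Note that column defaults apply when a row is inserted. Keeping the audit fields current on UPDATE usually needs an additional mechanism such as a trigger, which this sketch does not show.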

In another case, you provide a getLatestTransactions(rows) API that is used mostly in scenarios requiring at most 50 rows. You want to encourage limiting the result to 50 rows for performance reasons, so you default the rows parameter to 50. For other cases, the API can be called with an explicit value for the rows parameter, which keeps the API flexible.
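
As a rough illustration, the transactions API described above might look like this in Python. The function name is a Python-styled stand-in, and the Transaction type and in-memory data are assumptions made only so the sketch runs on its own.

```python
from dataclasses import dataclass

DEFAULT_ROWS = 50  # the row limit the post wants to encourage

@dataclass
class Transaction:
    id: int
    amount: float

# Hypothetical in-memory data standing in for a real transaction store,
# ordered oldest to newest.
_TRANSACTIONS = [Transaction(id=i, amount=float(i)) for i in range(1, 121)]

def get_latest_transactions(rows: int = DEFAULT_ROWS) -> list[Transaction]:
    """Return the newest transactions, newest first, capped at `rows`."""
    if rows < 1:
        raise ValueError("rows must be a positive integer")
    return list(reversed(_TRANSACTIONS[-rows:]))

typical = get_latest_transactions()           # the nudged path: 50 rows
explicit = get_latest_transactions(rows=100)  # the occasional larger request
```

Callers who say nothing get the performance-friendly limit, while the occasional caller with a different need can still ask for it explicitly.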

In more nuanced cases, I've used default parameters to support the existing contract of an interface while allowing for new (e.g. version 2) alternate behaviors through an added optional parameter. The default value preserves the original behavior, while a value supplied for the added parameter enables the new behavior.

The agreement with existing consumers is preserved, while an enhanced contract is enabled. This practice extends the life of the interface, enabling code reuse and flexibility.
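
One way to picture this contract-preserving use of defaults is the sketch below. The function, its match_mode parameter, and the two matching behaviors are all hypothetical and chosen only for illustration.

```python
def find_customers(names: list[str], query: str, match_mode: str = "exact") -> list[str]:
    """Return the names that match `query`.

    match_mode="exact" is the default and reproduces the original behavior.
    match_mode="prefix" is the added, opt-in behavior.
    """
    if match_mode == "exact":
        return [n for n in names if n == query]
    if match_mode == "prefix":
        q = query.lower()
        return [n for n in names if n.lower().startswith(q)]
    raise ValueError(f"unknown match_mode: {match_mode!r}")

names = ["Ana", "Anabel", "Ben"]
original_call = find_customers(names, "Ana")                  # existing callers, unchanged
new_call = find_customers(names, "ana", match_mode="prefix")  # opt-in new behavior
```

Existing call sites keep working without modification because they never mention the new parameter.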

If you’ve purchased a drink or any kind of merchandise from a counter with a newer cash register, you have probably encountered one of the most common examples of leveraging the default. Usually, the prominent options suggest various tip amounts, and unsurprisingly, the last item is the “No Tip” option in a much smaller font.

Provide Next Steps

Provide actionable next steps to the consumer of the error response. If the next step for the consumer is to review the documentation on possible causes, or to contact another team downstream, then say so in the error response. This specificity may take the form of a GUID unique to the error instance, which serves as a common reference for both the user and the team. The response could also contain a link to online documentation and a contact email or phone number.

Without an actionable message, the recipient is nudged to contact your team for support. It's hard to argue that such interactions are a good use of anyone's time. Centralized teams supporting enterprise APIs could easily get overwhelmed by these ad hoc requests, stealing precious time from feature development.
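
The kind of error response described in this section could be assembled along these lines. This is only a sketch: the field names, the documentation URL, and the support address are hypothetical placeholders, not a standard or an existing API.

```python
import uuid

# Hypothetical values; replace with your team's real documentation and contact.
DOCS_URL = "https://example.com/docs/errors"
SUPPORT_EMAIL = "api-support@example.com"

def build_error_response(code: str, message: str, next_step: str) -> dict:
    """Build an error payload that tells the consumer what to do next."""
    return {
        "error": {
            "code": code,
            "message": message,
            # Unique per occurrence, so the user and the support team can
            # refer to the same incident.
            "instanceId": str(uuid.uuid4()),
            "nextStep": next_step,
            "documentation": f"{DOCS_URL}/{code}",
            "contact": SUPPORT_EMAIL,
        }
    }

response = build_error_response(
    code="UPSTREAM_TIMEOUT",
    message="The inventory service did not respond in time.",
    next_step="Retry after 30 seconds. If it persists, contact the inventory team.",
)
```

The point is less the exact shape than that every error answers the question "what should I do now?" before the consumer has to ask a person.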

Pave The Way

Make it easier for your target consumers to effect the change you are promoting, whether that is using a new API or moving from on-premise to the cloud. Target consumers may have various timelines and motivations in adopting the proposed change, so nudges may be used to move the process along.

And how does one learn which nudges to apply in context? One mental hack I use quite a bit is to play pretend and be the consumer. The current industry term is dogfooding. One very concrete way to dogfood in the IT business is to build the deliverable that you think your consumer will build. Usually, this is the venerable sample code that uses the promoted API, or a sample application built with the new architecture. Doing so forces you to take the consumer's perspective, enhances empathy, and provides insight into very real ways to 'pave the way'.

For example, if you are promoting the use of your APIs, are you providing sample code that is regularly updated and verified to be working? Do you know how long it takes a developer from registration to working sample code? And if it is longer than expected, what are the hiccups along the way?

At the enterprise architecture level, let's say there is a decision to adopt a specific cloud platform. Are there sample applications or reference architectures that show how the specific cloud services would be used within the enterprise and integrated with the other existing enterprise components? What other gates need to be cleared for the application team to be able to use the specific cloud service in their solution?

Carefully Categorize

Be aware of the biases that may be triggered by creating categories, labels, and lists, such as when doing data modeling, service partitioning, and UX design. Leverage these biases to nudge while avoiding any unintended consequences.

First, the paradox of choice states that having too many choices can have the effect of delaying the choice. This finding broke the myth that having more choices is always better. And we all know that having too few choices is also suboptimal. As an example, let’s say the topic is how one sees the weather. Would these two options be enough: 1) good 2) bad? Or should we offer three: 1) good 2) neutral 3) bad? These decisions will be context-specific, but a guideline would be to have just enough categories to get to the desired granularity.

Second, the partition dependence bias says that our choices are influenced by the way items are grouped into categories. And closely related is the diversification bias which says that we tend to apply equal weight to each choice when equal weighting may not always be appropriate.

Partition dependence commonly shows up when we define hierarchies such as the all-too-common repository folder structure. It is such a habit now to assume that the folders are organized by file type (HTML/CSS/JS vs Java vs SQL). A starting layout based on file type might prime the team to focus more on the technical structure of the application, and deemphasize other ways to think about the code, such as by business function.

We see this friction when we have to jump around so many folders just to solve for one use case. Generally, working by use case would make for a more business-centric starting point. However, quite a few factors can sway the choice, and a healthy debate usually ensues. What's key is to be thoughtful about the choice and to communicate the motivations to encourage alignment.

Use Sludge for Good

Use sludge to discourage behavior that is not in the users' best interest. If an API is being deprecated, then gracefully dial down support for it with measures such as an announced end date. With all their other priorities, consumers of that API may not switch unless there is some incentive to do so, and that sludge can help get them going in the right direction.
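
A lightweight form of this kind of sludge is a deprecation warning that fires on every call and names the announced end date. The sketch below uses Python's standard warnings module; the function names and the date are hypothetical.

```python
import warnings
from datetime import date

# Hypothetical end date; in practice this is the date announced to consumers.
SUNSET_DATE = date(2030, 1, 31)

def get_report_v2(account_id: str) -> dict:
    """The replacement API that consumers are being nudged toward."""
    return {"account": account_id, "version": 2}

def get_report(account_id: str) -> dict:
    """Deprecated API: still works, but adds friction on every call."""
    warnings.warn(
        f"get_report() is deprecated and will be removed on "
        f"{SUNSET_DATE.isoformat()}; use get_report_v2() instead.",
        DeprecationWarning,
        stacklevel=2,  # attribute the warning to the caller's line
    )
    return {"account": account_id, "version": 1}
```

The old call keeps working, so no option is taken away, but each use now carries a small reminder. Whether the warning is actually displayed depends on the warning filters in effect where the code runs, so an announcement through other channels is still needed.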

Security is an area rife with the constant need to balance lowering friction against incorporating sludge for good. Providing an API that does not require any authentication is a big nudge toward its use, but the scenarios where that makes sense are more the exception. One recent favorable trend is the use of social logins for authentication, like using your Google ID to authenticate with some other website. It nudges users to register and use the site because they don't need to manage another user ID, while the site remains secure.

Be Mindful of Behavioral IT

My journey into this rabbit hole started about a decade ago in a team meeting, when the director referenced a concept called Conway's Law. Conway's Law states that in IT, the systems we build and deploy are strongly influenced by the communication patterns and structures within the organization, such as the org chart. As an architect whose job description includes seeing what is (current state) and what can be (future state), I found this to be a game changer. From that point onward, I could not see IT architecture the same way again.

The bits and bytes of tech can only go so far. For tech to be more effective, we need to better understand its creator and beneficiary, the human being, and specifically how the human mind works. There is a whole domain to explore at the intersection of behavioral science and IT, also known as Behavioral IT. This intersection is wide, so I focus on the effect of cognitive biases on our IT deliverables, whether a table schema or an enterprise architecture roadmap.

Researchers have noted that there is a gap in our understanding of how cognitive biases show up in IT deliverables. I hope that, like me, you are curious to explore it.

Summary

We have explored ways nudging can improve the software we build, from the tables and APIs we provide, to the authentication and architectures we enable, to the behaviors of our end users.

We’ve touched on:

specifying defaults,

providing next steps,

paving the way,

carefully categorizing,

using sludge for good.

My goal with this post is to introduce these ideas so that they become part of how you show up in your IT work. Regardless of our roles in IT, we are all choice architects with opportunities to nudge. How do you plan to nudge towards better software today?

Acknowledgments

Thanks to Mike and Tessa for helping shape this post.

References

Aquino, A. (2017, June 16). Mind The Gap. https://www.flickr.com/photos/alexaquino/52739297608/in/dateposted/

Wikipedia contributors. (2023, March 9). Behavioural sciences. Wikipedia. https://en.wikipedia.org/wiki/Behavioural_sciences

Giang, V. (2012, June 14). 12 Ways That People Behave Irrationally. Business Insider. https://www.businessinsider.com/predictably-irrational-2012-6#we-are-more-willing-to-do-things-for-free-than-if-wed-gotten-paid-for-it-5

Thaler, R. H., & Sunstein, C. R. (2021). Nudge: The Final Edition - Penguin Random House. https://sites.prh.com/nudgethefinaledition

Sludge - The Decision Lab. (n.d.). The Decision Lab. https://thedecisionlab.com/reference-guide/psychology/sludge

Wikipedia contributors. (2023, March 8). Reactance (psychology). Wikipedia. https://en.wikipedia.org/wiki/Reactance_(psychology)

Resnick, B. (2019, January 4). Intellectual humility: the importance of knowing you might be wrong. Vox. https://www.vox.com/science-and-health/2019/1/4/17989224/intellectual-humility-explained-psychology-replication

Wikipedia contributors. (2023, January 11). Choice architecture. Wikipedia. https://en.wikipedia.org/wiki/Choice_architecture

File:Square Stand POS.jpg - Wikimedia Commons. (2015, January 16). https://commons.wikimedia.org/wiki/File:Square_Stand_POS.jpg

Wikipedia contributors. (2023, January 8). The Paradox of Choice. Wikipedia. https://en.wikipedia.org/wiki/The_Paradox_of_Choice

N. (2022, May 20). Cognitive Bias: Partition Dependence Bias. Neuroprofiler. https://neuroprofiler.com/en/cognitive-bias-partition-dependence-bias/

Naive Allocation - The Decision Lab. (n.d.). The Decision Lab. https://thedecisionlab.com/biases/naive-allocation

Wikipedia contributors. (2022, August 7). Social login. Wikipedia. https://en.wikipedia.org/wiki/Social_login

Overview. (n.d.). Google Developers. https://developers.google.com/identity/gsi/web/guides/overview

Kamble, P. (2019). Behavioral IT® - A Multi-disciplinary Approach to Address the IT Woes of Businesses & Top Professionals in an IT-Driven World. Social Science Research Network. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3715332

Fleischmann, M., Amirpur, M., Benlian, A., & Hess, T. (2014). Cognitive Biases in Information Systems Research: A Scientometric Analysis. European Conference on Information Systems. https://aisel.aisnet.org/cgi/viewcontent.cgi?article=1053&context=ecis2014

Wikipedia contributors. (2022, November 19). Conway’s law. Wikipedia. https://en.wikipedia.org/wiki/Conway%27s_law